This is the engineering companion to our piece on The Business Case for Cardiac AI Diagnostics. If you're a CEO, head of product, or founder scoping a cardiac AI program at the strategic level, markets, build/buy/partner, regulatory cost, reimbursement, start there. This article is for the ML, clinical engineering, and regulatory leads who have to actually build the thing.

The article scopes specifically to 12-lead ECG interpretation systems, intended for clinician decision support, regulated as Class IIa devices under EU MDR. Single-lead wearables (Apple Watch, KardiaMobile-class) and pediatric ECG interpretation are different problems with different data, regulatory, and clinical profiles, and are not covered here.

What follows reflects the experience of designing and building KARDI AI's cardiac diagnostic, from first model training runs to CE-marked device, not a literature review.

Why ECG Interpretation Is a Harder ML Problem Than It Looks

Cardiac AI is one of the most mature sub-fields in clinical AI, with public datasets (PTB-XL contains 21,837 records), well-defined classification targets, and a decade of published research showing near cardiologist-level performance on specific tasks. On benchmarks, the problem looks tractable.

In clinical deployment it isn't. The gap between benchmark performance and real-world reliability in cardiac AI is unusually wide, wider than in radiology AI, which benefits from more standardised acquisition, and wider than in dermatology AI, which has fewer high-consequence diagnoses. Three structural reasons:

Acquisition variability. ECG signals depend on equipment, electrode placement, skin impedance, patient movement, and ambient electromagnetic noise. Models trained primarily on signals from one manufacturer's system don't perform as well on other devices from another manufacturer. Public datasets help less than they appear to because most are dominated by a small number of acquisition contexts. A device intended for use across diverse clinical settings needs training and validation data covering the acquisition variability it will actually encounter, which is rarely achievable from public data alone. Concretely, training and fine tuning models for KARDI AI required hundreds of thousands of proprietary ECGs from clinical partners across multiple equipment vendors and care settings.

Rare but critical conditions. The conditions where cardiac AI is most clinically valuable, STEMI (ST-elevation myocardial infarction), complete heart block, life-threatening arrhythmias, are also the least represented in most training datasets. Public datasets like PTB-XL contain relatively few STEMI cases compared to the more common findings that dominate. A model with high overall accuracy can have poor sensitivity on exactly the conditions where a missed diagnosis is most clinically severe. Building for clinical use requires explicit handling of rare-but-critical conditions through targeted data collection, oversampling, or class-weighted training and validating performance per condition, not just on overall accuracy.

Normal ECG variability. The space of "normal" is larger than most benchmarks capture. Age-related changes, sex differences (women have shorter QT intervals on average), athlete's heart morphology, and chronic-medication artifacts all produce patterns that look abnormal to a model that hasn't seen enough normal variation. Elevated false positives in clinical deployment generate alert fatigue and erode clinical trust faster than almost any other failure mode — including, often, missed positives. Once a clinician learns the system flags too aggressively, they tune it out.

The Label Quality Problem That Most Teams Don't Address

Before model architecture, before preprocessing, the question is: what are you training against? Cardiologist labels are the standard ground truth for ECG AI. They are also famously inconsistent. Inter-rater reliability among cardiologists on common ECG findings sits at kappa values of roughly 0.5 – 0.7, meaning two cardiologists disagree on the same ECG more often than buyers of cardiac AI systems would assume.

This has three concrete implications. First, a model can't be more accurate than its labels. If 15% of training labels are genuinely wrong (or are reasonable disagreements treated as correct), achieving 95% benchmark accuracy mostly means you've fitted the noise. Second, "cardiologist-level performance" is a lower bar than the marketing implies. It means matching the average of a noisy reference standard, not matching ground truth. Third, building serious clinical AI requires a labelling pipeline that addresses this: multi-rater consensus on training labels, separate adjudicated test sets where ground truth is clinically confirmed (downstream cath lab, echocardiogram, outcome data), and explicit reporting of rater disagreement rates in the regulatory submission.

This is one of the most underweighted parts of building cardiac AI, and it is upstream of everything else.

The Model Architecture Decision

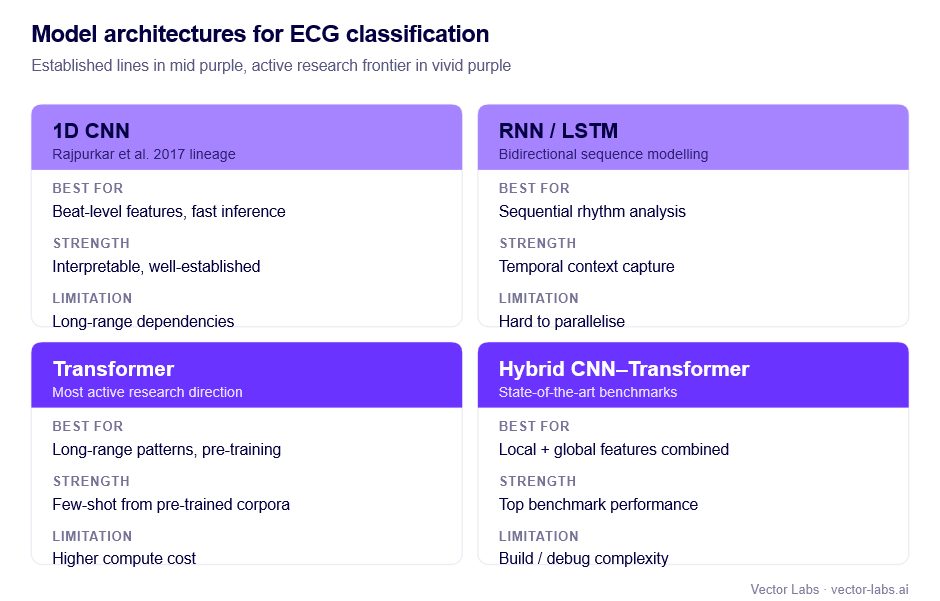

The dominant architectures for ECG classification:

1D Convolutional Neural Networks (CNNs). The original deep learning approach for ECG interpretation, established by Rajpurkar et al. (2017) at Stanford. 1D CNNs apply convolutional filters across the time axis, learning features at multiple temporal scales — from individual QRS morphology to rhythm patterns. Fast, computationally efficient, interpretable at the feature level. Primary limitation: limited modelling of long-range dependencies.

Recurrent Neural Networks (RNNs/LSTMs). Better at temporal dependencies than pure CNNs. Bidirectional LSTMs process the signal in both directions, useful when context from later in the recording informs interpretation of earlier segments. More expensive than CNNs and harder to parallelise. Often used as a component in hybrid architectures.

Transformer architectures. Self-attention handles long-range dependencies in ECG well the relationship between P wave and QRS complex, or between beat morphology and rhythm regularity, can be captured without explicit feature engineering. Pre-trained ECG transformers (analogous to BERT for text) trained on large unlabelled corpora can be fine-tuned with significantly fewer labelled examples than training from scratch. This is the architecture direction with the most active research momentum as of 2025–26.

Hybrid CNN–Transformer architectures. Combining a CNN front-end (for local feature extraction from individual beats) with an attention-based back-end (for temporal and rhythm-level patterns) often outperforms either alone. The trade-off is complexity in build, debug, and validation cycles.

The clinical-grade consideration is upstream of architecture choice. More complex models are harder to explain to clinicians and regulators, and explainability, while not a hard regulatory requirement at Class IIa, is a practical requirement for clinical adoption. Architectures that produce attention maps or feature attribution (per-beat importance, lead-level contribution, segment-level highlights) make it dramatically easier to satisfy the transparency expectations that clinicians and notified bodies will probe for. A model that's marginally more accurate but architecturally opaque is often a worse business outcome than a model that's slightly less accurate but explainable.

Signal Preprocessing: Where Most Teams Underinvest

Preprocessing quality has a larger impact on final model performance than the choice of model architecture. This is the most counterintuitive engineering finding in cardiac AI, and the one most consistently missed by teams that optimise the model first.

Baseline wander removal. DC offset and low-frequency baseline drift (from breathing, electrode movement) must be removed before analysis. High-pass or median filtering approaches remove baseline wander while preserving clinically relevant ST segment changes. Aggressive filtering that strips ST segment information is a common error that destroys ischaemia detection performance, one of the few preprocessing mistakes that is also a clinical safety issue.

Powerline interference removal. 50Hz (EU) or 60Hz (US) powerline interference is a near-universal artifact. Notch filtering at the powerline frequency is standard. The trade-off is that notch filtering slightly distorts signal morphology. Train-deploy preprocessing mismatch (training on filtered signals, deploying in a different interference environment) adds noise to inputs that's avoidable with strict pipeline versioning.

Resampling. Different ECG systems acquire at different sampling rates (typically 250–1000Hz). Models trained at one rate must be evaluated at that rate, or signals resampled to it. Obvious in principle, missed in multi-site evaluations more often than expected.

Lead verification and graceful degradation. A 12-lead ECG assumes all 12 leads are properly acquired. In clinical practice, lead detachment, poor skin contact, and patient movement produce missing or unreliable leads. A clinical-grade system needs an explicit policy: exclude the recording from analysis (with appropriate documentation in the IFU), or degrade gracefully to a lead-reduced analysis with explicit uncertainty signalling. Silent failure on unreliable leads is both a regulatory and a patient safety issue.

Normalisation. ECG amplitude varies significantly between patients. Lead-level normalisation, per-recording standardisation, or amplitude augmentation in training are all valid. Inconsistent normalisation between training and deployment is a source of performance degradation that is fully avoidable with a documented, version-controlled preprocessing pipeline.

The Multi-Label Problem

Most clinically relevant ECG analysis is multi-label: a single ECG may simultaneously exhibit atrial fibrillation, left bundle branch block, and ST depression. The sicker patients who most benefit from AI triage are the ones most likely to have multiple co-occurring findings.

Single-label classification mishandles this. Multi-label classification, independent prediction of presence/absence of each condition, is the correct formulation, and it raises specific challenges.

Label correlations. Some conditions co-occur (left bundle branch block and heart failure). Others are mutually exclusive (sinus rhythm and atrial fibrillation). Architectures that treat each condition fully independently miss this structure; architectures that explicitly model correlations are more complex but better-calibrated.

Condition-specific thresholds. Multi-label models produce a probability per condition. The conversion threshold should be condition-specific, set by the clinical cost of false negatives versus false positives for that finding. Low threshold for STEMI (catch every one, accept some false positives). Higher threshold for low-acuity findings where false positives cost more than false negatives. Threshold selection must be documented, clinically justified, and validated on held-out data, not chosen on the same data the model was trained on. The technical file needs to show threshold selection methodology, not just the resulting numbers.

Class imbalance per condition. The training set will have very different prevalence rates per condition. STEMI and complete heart block need targeted handling: oversampling, class weighting, or augmentation, or the model will collapse to majority-class predictions on exactly the conditions that matter most.

Conformal prediction and set-valued outputs. Worth considering for 2025–26 deployments: rather than emitting point probabilities per condition, conformal methods emit prediction sets with guaranteed coverage rates. For clinical AI where defensible uncertainty bounds are needed, conformal prediction gives a statistically rigorous answer to "how confident should the clinician be?" and the framework is starting to receive regulatory attention as a path to formalising uncertainty quantification.

Bias, Subgroup Performance, and Validation

Performance across demographic and clinical subgroups is increasingly a gating regulatory and reputational issue. Validation reporting that aggregates over an entire test set without subgroup breakdown is becoming an unacceptable submission.

For cardiac AI, the subgroups that materially affect performance are sex (QT interval differences, body habitus differences in lead positioning), age (paediatric ECG is essentially a different problem and most adult systems should explicitly exclude paediatric use; geriatric ECG has higher rates of conduction abnormalities baseline), ethnicity (some morphology variants have ethnic prevalence patterns that map onto training data imbalance), and clinical setting (ICU, post-surgical, and ambulatory patients all produce different signal characteristics).

A serious validation reports performance per subgroup, with confidence intervals, and explicitly documents the subgroups for which the model is and is not validated. Excluding a subgroup is a legitimate strategic choice; failing to disclose the exclusion is not.

Confidence Calibration

The model produces a probability score. For clinical use, the score must mean something. А 0.8 should correspond to roughly 80% empirical accuracy, not just maximal model confidence.

Most deep learning models are poorly calibrated out of the box. Temperature scaling, a single learned scaling parameter applied to output logits, fitted on a held-out calibration set, is the standard post-hoc fix. Well-calibrated scores enable clinically meaningful uncertainty thresholds (immediate review above 0.9; routine review 0.5 – 0.9; deprioritise below 0.5), honest communication to clinicians about model confidence per prediction, and post-market monitoring (declining average confidence is an early signal of distributional shift).

For Class IIa MDR submission, calibration analysis on the validation set should be documented as part of the performance evidence.

Locked vs. Learning Models, and the PCCP Question

The first regulatory question a sophisticated reviewer asks: is this a locked model, or does it continue learning post-deployment? The answer determines the entire change-management story.

Most CE-marked cardiac AI today is locked: the deployed model is fixed, retraining produces a new version, and any significant change requires regulatory notification or a new conformity assessment. This is the safe path and the dominant pattern.

The alternative is a Predetermined Change Control Plan (PCCP), formalised by FDA in 2024, with EU regulators following, in which specific kinds of model updates are pre-authorised within documented bounds. PCCPs let you retrain on new data, adjust thresholds within ranges, or expand to additional conditions without a full new submission, provided the change stays within the pre-authorised envelope.

PCCPs are operationally more demanding but can dramatically reduce the lifetime regulatory cost of a successful product. For any cardiac AI program planning ongoing model improvement post-launch, the PCCP question is now strategic, not technical. Treating it as a regulatory afterthought is a frequent and expensive mistake.

Post-Market Performance Monitoring

Cardiac AI is susceptible to distributional shift: clinical population changes (demographics, comorbidities), equipment changes, coding-practice changes that affect labels, and workflow changes that alter which patients receive ECGs.

A clinical-grade system needs monitoring infrastructure that detects performance degradation before it becomes a patient safety event. The practical components:

Real-world performance tracking. Where outcomes data is accessible — confirmed downstream diagnoses, treatment decisions, patient outcomes — systematic comparison of predictions to outcomes provides direct performance evidence on a sample of post-deployment cases.

Input distribution monitoring. Statistical monitoring of incoming signal characteristics (amplitude distributions, heart rate distributions, lead quality metrics) detects when the device is being used in populations or contexts different from validation. Input shift is a leading indicator of performance shift.

Anomaly rate monitoring. Tracking high-confidence positive rate, uncertain-output rate, and low-quality-input rejection rate provides operational signals that don't require clinical outcome data — useful because clinical outcome data is slow.

Complaint and incident surveillance. A formal system for receiving and investigating clinical complaints (missed diagnoses, unexpected flagging, UI confusions) is required by EU MDR vigilance and is a leading source of real-world performance signal.

Monitoring outputs feed back into the change-management story: triggering retraining decisions inside a PCCP envelope, or formal regulatory submissions for changes outside it.

Cybersecurity and the AI Act Overlay

Two regulatory dimensions have become non-optional for new cardiac AI submissions and are often underweighted by ML-led teams.

Cybersecurity (IEC 81001-5-1 and MDCG 2019-16 guidance). Networked cardiac AI devices face cybersecurity documentation requirements covering threat modelling, secure development lifecycle, vulnerability management, and post-market security monitoring. The expected artefacts are now extensive enough that cybersecurity is a parallel workstream alongside signal processing and clinical evaluation, not a late-stage checkbox.

EU AI Act. Cardiac diagnostic AI is high-risk under the AI Act, in addition to being a Class IIa medical device under MDR. The dual regime adds requirements around training data governance, transparency to deployers, human oversight provisions, and conformity assessment of the AI system specifically (overlapping with but distinct from the MDR conformity assessment). For programs targeting EU launch in 2026 and beyond, AI Act compliance is now a material part of the submission burden.

US programs face the FDA's parallel framework: De Novo or 510(k) pathway depending on predicate availability, FDA guidance on AI/ML SaMD, and the formalised PCCP framework. Most cardiac AI programs serious about commercial scale need both EU and US strategies aligned from the start, not sequenced.

Documentation Burden, Translated

The specific EU MDR documentation for a Class IIa cardiac ECG AI (intended purpose: flagging ECG abnormalities for cardiologist review) includes the intended purpose statement (specific to ECG acquisition type, conditions covered, intended clinical setting, intended user); IEC 62304 documentation for signal processing pipeline, model architecture, training pipeline, and inference engine; ISO 14971 risk management file addressing ECG-specific risks (missed STEMI, lead artifact handling, acquisition variability, distributional shift); IEC 81001-5-1 cybersecurity documentation; clinical evaluation covering literature review, validation study with pre-specified per-condition sensitivity/specificity/AUC, multi-site or external validation evidence, comparison to cardiologist performance where available, and subgroup analysis; usability evaluation (IEC 62366-1) demonstrating that cardiologists correctly interpret outputs and understand limitations; post-market surveillance plan including performance monitoring methodology and PCCP scope where applicable; and AI Act conformity documentation overlapping with MDR.

The documentation phase is where most cardiac AI programs blow their timelines. Teams that under-resource regulatory writing in favour of model improvement consistently end up with a strong model and no path to market. The technical file is the product, in regulatory terms and budgeting it as roughly a quarter of total program effort is realistic.

What This All Means

Building cardiac AI to clinical-grade is not principally an ML engineering problem. The model architecture choices are fairly settled. The hard parts are data acquisition at sufficient breadth and label quality, preprocessing and pipeline discipline, condition-specific validation, calibrated uncertainty, change-management strategy that survives post-launch retraining, and a regulatory documentation effort sized to the actual scope.

The teams that ship are the teams that resource these as parallel workstreams from day one, not the teams that build the model first and then "do the regulatory part."

If you're scoping a cardiac AI program at the strategic level, markets, build/buy/partner decisions, regulatory cost, timeline reality, defensibility, the companion piece is here: The Business Case for Cardiac AI Diagnostics.

If you'd like to pressure-test your program against what we learned building KARDI AI, we run technical due diligence and engagement scoping for cardiac AI teams. Get in touch at vector-labs.ai.